Introducing Motion Capture at Protege

Structured Motion for Learning Systems

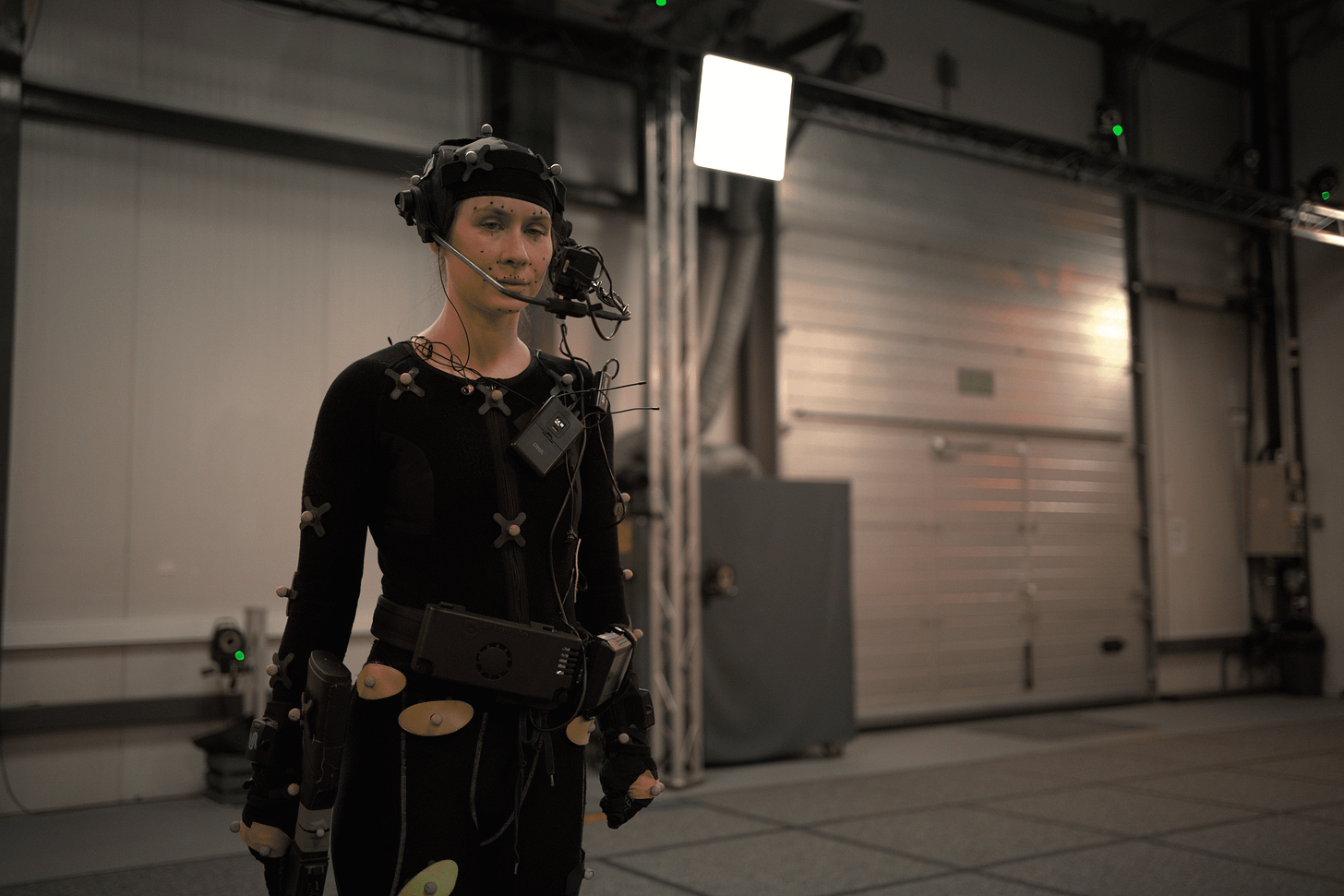

We’re announcing the launch of Protege’s newest vertical: motion capture data for AI training. Human motion is central to some of the most difficult technical frontiers: robotics, embodied agents, and physically grounded simulation.

Recent advances in training on motion capture data, notably the sequencing of motion events with LLMs, promise motion models with more complex, longer-context capabilities. For these advances to scale, however, training data has to advance with them. In particular, they will require a vast increase in the volume and diversity of motion capture data, combined with multi-person data, quality annotations, and temporal alignment.

We’ve partnered with studios, labs, and motion capture technology providers to surface datasets that are designed for AI training, technically rigorous, and diverse enough to support model generalization in the physical world. The result is a curated, complementary blend of motion capture sources:

Thousands of hours of natural movement from thousands of performers, capturing real-world variability and expressiveness at scale. In parallel, ultra-precise studio-grade data offers rich semantic annotations from highly controlled, ground-truth sessions featuring Vicon marker setups.

Segments filterable by actor profile, body part and limb, or motion type for flexible experimentation.

Multimodal training via motion paired with facial scans, thousands of hours of finger tracking, reference video, and object and performer scans.

All Protege motion capture data has clear provenance, and all rights are ethically secured.

Motion Capture Training Complexity

Training AI models on motion capture data comes with unique challenges tied to data quality, representation, and generalization. While motion capture provides rich temporal and spatial signals, turning those signals into reliable model performance requires careful handling of input formats, motion dynamics, and physical realism. Some of the key obstacles:

Data diversity: Many datasets are designed for specific non-training use cases, so they cover a narrow range of activities or lack contextual labels (e.g., emotion, intent, environment).

Modality misalignment: Multimodal training depends on synchronized audio, video, or environmental context, and these streams are often missing or poorly aligned with the motion data.

Motion realism vs. physics: Models may produce visually smooth motion that violates physical constraints (e.g., floating, limb distortion).

Joint rotation representation: There are several ways to describe how a body part rotates (for example, Euler angles, quaternions, or rotation matrices), but each method has quirks that can cause errors, instability, or confusion for the model.

Lack of standard benchmarks: Objective evaluation across different tasks and datasets remains elusive.

Latency and stability: Real-time applications like games or robotics require fast, stable inference.
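
To make the joint rotation point above concrete, here is a minimal Python sketch of one well-known quirk: a unit quaternion `q` and its negation `-q` describe the same rotation, so a naive numeric distance can penalize a model for a prediction that is physically correct. The function names and the choice of a sign-invariant distance are illustrative assumptions, not a description of how any particular dataset or model handles rotations.

```python
import math

def quat_to_matrix(q):
    """Convert a quaternion (w, x, y, z) to a 3x3 rotation matrix."""
    w, x, y, z = q
    n = math.sqrt(w * w + x * x + y * y + z * z)  # normalize defensively
    w, x, y, z = w / n, x / n, y / n, z / n
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]

def naive_distance(q1, q2):
    """Plain Euclidean distance between quaternion components."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(q1, q2)))

def sign_invariant_distance(q1, q2):
    """Distance for unit quaternions that treats q and -q as identical."""
    dot = sum(a * b for a, b in zip(q1, q2))
    return 1.0 - abs(dot)

# Hypothetical example joint: a 90-degree rotation about the z axis.
half_angle = math.radians(90.0) / 2
q = (math.cos(half_angle), 0.0, 0.0, math.sin(half_angle))
neg_q = tuple(-c for c in q)

same_rotation = all(
    abs(a - b) < 1e-12
    for row_a, row_b in zip(quat_to_matrix(q), quat_to_matrix(neg_q))
    for a, b in zip(row_a, row_b)
)
print("same rotation matrix:", same_rotation)
print("naive distance:", naive_distance(q, neg_q))
print("sign-invariant distance:", sign_invariant_distance(q, neg_q))
```

Both quaternions produce the same rotation matrix, yet the naive distance treats them as far apart, while the sign-invariant distance is effectively zero. Choosing a loss or representation that accounts for this kind of ambiguity is one way to reduce the instability described above.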

To explore our datasets or collaborate, visit withprotege.ai/motion-capture.